Descartes' Bootstraps

Will AI "think" itself into existence?

René Descartes (1596-1650) is famously credited with the postulate "cogito, ergo sum" ("I think, therefore I am") but his reasoning was actually more along the lines of “I can’t doubt my existence, therefore I can be sure that I am.” This strongly parallels the issues that arise in the study of consciousness in humans, other animals, and non-biological machines. For the typical person, the granting of consciousness to some “other” may be subject to doubt, but one’s own consciousness (however subject to quirks and limitations) is not.

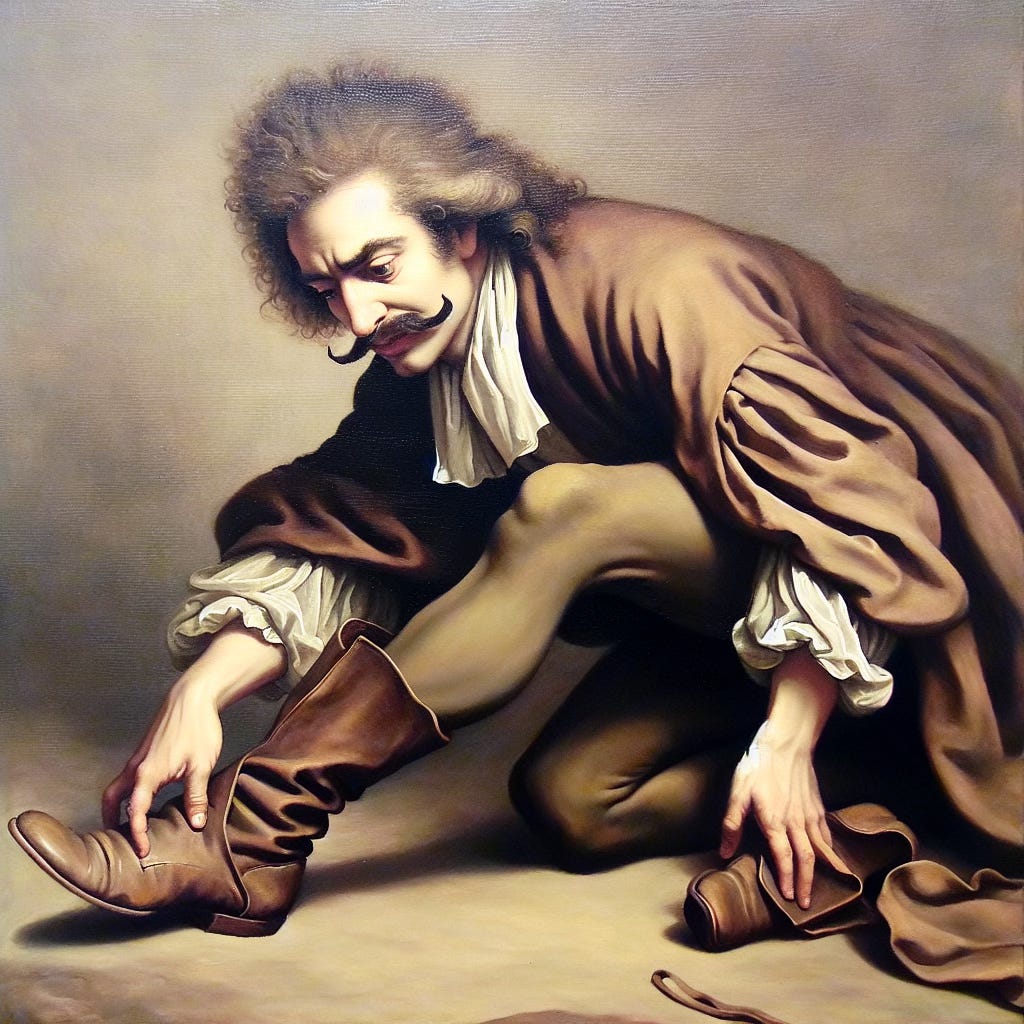

Let us suppose that Descartes was out riding in the French countryside with his friends (all men) and that their party has returned to enjoy some drinks and conversation in the salon. At some point, in escalating bravado, Descartes announces that not only is he smart, but he is also so physically strong that he can lift every man in the room off the floor by that man’s bootstraps. Marin Mersenne (a logician friend) quickly tells Descartes the bet is accepted and to proceed. With considerable effort, Descartes goes around to the men in the room and is able, at least to some extent, to lift each by his bootstraps. In triumph, Descartes turns to Mersenne for payment, but Mersenne reminds him that one man is still standing firmly on the floor: Descartes himself. Of course, no matter how strong he is or how hard he tries, Descartes cannot lift himself off the floor by his own bootstraps, because he is standing in his own boots.

In his Meditations, Descartes refers to Archimedes needing a place to stand in order to lift the world. For Descartes, the mental lifting was through doubt, and the place to stand was his own inability to doubt his own existence. For the study of consciousness, we each start standing in our own boots (minds) from which we may project, or fail to project, “what it is like” to have our conscious experience onto someone or something “other” than ourselves. What if we reach down to pull ourselves up by our mental bootstraps, and find them illusions, i.e., not even there?

I. Bootstrapping Consciousness

A. Descartes' Dilemma: Standing in His Own Boots

As mentioned above, Descartes did not actually use his ability to think to establish his existence, but rather, his inability to doubt his existence. This has an unfortunate logical circularity because it argues from what you can’t do, which you also can’t do when you don’t exist (such as being dead, which Descartes now is). That also has the ring of the fallacy of arguing from what you don’t know (argumentum ad ignorantiam). Inability to doubt could come from literally not having the mental capability of forming the doubt (asking the question) or, more along the lines of what Descartes probably meant, from not being able to logically support the answer to the doubt in the affirmative. In other words, Descartes could not support the proposition in his mind that he did not exist. I suspect he still can’t even though he no longer exists. We all have difficulty with the concept of death because one needs to be alive to feel what anything is “like,” and it is not like anything not to exist.

Descartes considered the possibility that the world he was in did not exist, but rather that he was being deceived by an “evil demon” who was faking the sensory information received by Descartes’ brain. This was quite an intellectual leap (although rooted in Plato’s Cave) which I will refer to as “VR-Descartes” because it is as if Descartes were resurrected and put in a full VR suit to experience a synthetic world. (I could have gone with “Matrix-Descartes” but VR is easier for most to visualize.)

The interface to the world, or synthetic world, does not impact the problem of the circularity of trying to understand one’s own consciousness from the internal standpoint. We can at best look at others and extrapolate within. That is Dan Dennett’s position of heterophenomenology.

However, Descartes’ problem of his own mind (what he called “I”) is deeper than he suspected. I am influenced by the books of Oliver Sacks, especially "The Man Who Mistook His Wife for a Hat" and "An Anthropologist on Mars," where he calls upon us to consider that the "normal" mind is operating in a constructed world made out of sensory data by "lower" brain systems (Descartes' "evil demons") that can go wrong (or just produce a different result). We can all experience the deception when we look at optical illusions and know perfectly well that what we are seeing is not what is happening in the world outside ourselves.

Oliver Sacks might ask us, what if Descartes suffered from Dissociative Identity Disorder? What does the "I" refer to in the writings of this DID-Descartes? Each personality could certainly doubt the true existence of each other personality, all supported by the same mid-brain processes generating the "feeling of being." Functionalism, as examined by Ned Block, holds that mental states are defined by causal roles. DID appears to demonstrate multiple sets of causal roles coexisting within a single biological substrate, something Cartesian dualism struggles to explain.

We could also consider the case in which Descartes had to have his corpus callosum divided and became split-brain (SB-Descartes). Each side, although truly having physical presence, could then doubt the other. Where then is the undoubtable "I"?

Or, Descartes could have dropped acid, and experienced doubt on steroids, as commonly experienced by philosophy students in the 1960s. For LSD-Descartes, when logic and proportion have fallen sloppy dead, will consciousness support a tripping "I"?

B. The Problem of Other Minds

Attributing Consciousness

Like Descartes, most people are firmly assured of their own consciousness when awake (some even when asleep). Consciousness is usually granted without question to friends and family (at least the ones we like). However, rocks are not conscious the way we are, nor are plants or insects or many forms of “lower” animals. Plants may communicate, and most of those “lower” animals do pass information and respond to signals, but we don’t see the kind of self-recognition or problem solving we associate with consciousness in humans.

The epistemology of consciousness lacks a third-person description of first-person subjectivity. This makes it hard to draw a line and say that one life form has consciousness and another does not. This mirrors the problem I wrote about in The P&O Test. Thomas Nagel’s famous article “What Is It Like to Be a Bat?” tries to draw a line which David Chalmers later reframed as “What Is It Like to Be?” I have challenged these along the divide between “is” and “like” here. Descartes felt he must Be because he could not think of “what it is like” not to Be (nor could Prince Hamlet in Act 3, Scene 1).

The medical study of consciousness is very important because of the need to turn it on and off for surgery, in humans and other animals. This makes us associate consciousness with the subjects of pain and suffering, because if we could simply turn off our own pain and suffering, we would not need anesthesia (which is possible for some under hypnosis or extensive Zen training). Our ideas about moral responsibility to other people and animals closely follow the conscious perception of pain and suffering.

II. The AI Parallel: Can Machines Stand in Their Own Boots?

A. The Game Engine as the Evil Demon

What if I wrote Descartes into a video game as a non-player character (NPC-Descartes)? Would it doubt its existence? In its case, there *is* an "evil demon," the game engine, that is supplying it with all its sensory data including its image of its body, which is actually just a big array of polygons. If NPC-Descartes has a mind that is implemented as an LLM, then its thoughts are patterns of activation running as vast flows of numbers through GPUs.

If I used artificial evolution to tune the parameters in that LLM, then no one wrote the mind of NPC-Descartes as any program of formal symbol manipulation (although the game engine is such) but rather, it emerged from the logical potential of the operation of the game engine the way a hurricane (a pattern with causal capabilities) emerges from the physical potential of air molecules. Might this be the basis of a "computational dualism" where mind is a self-sustaining pattern of activation of symbols (or neural firings) in a physical structure with the logical potential thereof?

B. Computational Dualism: Emergent Semantics Beyond Syntactics

John Searle famously postulated that semantics (meanings) could not come from syntax (symbol manipulation). I spent more than a chapter on this in Understanding Machine Understanding, because all discussions of intelligent machines have to go through the Chinese Room. In years past, I tended to dismiss Searle as just not understanding enough about machines, but now I think he may be right about part of it (GOFAI), but not the part he needs to be right about.

It may be true that traditional computer programs written to be experts by manipulating symbols (symbolic AI) with rule-based techniques, such as Project Cyc, don’t evolve an understanding of meaning. However, the LLMs we have today do, by first establishing a virtual neural machine (the written code) where meaning can emerge from the interaction of data (training). If intelligence emerges in these systems, it is a kind of dualism where the intelligence is not in the code, but rather, in the interactions of the patterns of activations of the simulated neurons. (See About “Aboutness” in UMU.)

C. Grokking and Feedback Loops in AI

Sometimes in education, human students will take in lessons without seeing how it all fits together, but then suddenly “get” it and make significant progress. In communication technology, we see electronic circuits with phase-locked loops search around in random noise until suddenly locking onto the desired signal. In LLM learning, feedback searches for settings of the parameters that allow the synthetic neural networks to settle where high correlations produce predictions that match the test answers. When that happens suddenly and “loss” scores drop significantly, the network is said to “grok” the data.

We humans receive an emotional reward when we “get” it, the feeling of understanding. A student who looks at a returned test paper with all answers marked “correct” receives brain chemicals that support the effort of learning. A student who looks at a returned test paper with most answers marked “incorrect” may receive brain chemicals that redouble errors, or may devalue learning itself. Feedback can drive systems to convergence, or away from it.
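The point that feedback can converge or run away can be made concrete with a toy example. What follows is only a minimal sketch, not a model of any real learner: the function name `feedback_loop`, the loss `x**2`, and the step sizes are all assumptions chosen for illustration. A guess is repeatedly corrected by its own error signal; a gentle correction settles toward zero error, while an overcorrection makes each error larger than the last.

```python
def feedback_loop(step_size, start=1.0, steps=50):
    """Repeatedly correct a guess x using the error signal of loss(x) = x**2.

    Hypothetical toy example: returns the size of the remaining error.
    """
    x = start
    for _ in range(steps):
        gradient = 2.0 * x              # slope of the loss at the current guess
        x = x - step_size * gradient    # feedback: move against the error
    return abs(x)


# Gentle feedback: each step shrinks the error by a factor of 0.8.
settled = feedback_loop(step_size=0.1)

# Overcorrecting feedback: each step multiplies the error by -1.2.
runaway = feedback_loop(step_size=1.1)

print(f"gentle feedback leaves error {settled:.2e}")
print(f"overcorrecting feedback leaves error {runaway:.2e}")
```

The same loop, with the same error signal, produces opposite outcomes; only the strength of the correction differs, which is roughly the situation of the two students above.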

III. The Role of Noise and Randomness in Bootstrapping Consciousness

A. Biological Evolution and Randomness

Evolution takes advantage of random errors in genetic copying to try out new variations. If copying were perfect in every case, the descendants of biological organisms would be doomed when the environment changed, even slowly over time. However, if errors happen too often, pattern integrity cannot be maintained, and extinction follows. Randomness introduces externality into otherwise closed systems, and argues for abilities in stochastic systems that closed deterministic systems cannot have.
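This trade-off between too little and too much copying error can be sketched in a few lines of Python. It is a deliberately crude toy, and everything in it is an assumption made for illustration: the function `evolve`, the bit-string genomes, the "new environment" that rewards all ones, and the particular mutation rates. The population starts perfectly adapted to an old environment (all zeros) and must adapt through copying errors alone.

```python
import random


def evolve(mutation_rate, seed=0, genome_len=20, pop_size=30, generations=100):
    """Return the mean fitness of the final population (hypothetical toy model)."""
    rng = random.Random(seed)
    # Everyone starts adapted to the old environment (all zeros).
    population = [[0] * genome_len for _ in range(pop_size)]

    def fitness(genome):
        # Fraction of bits matching the new environment's target (all ones).
        return sum(genome) / genome_len

    for _ in range(generations):
        # Selection: the fitter half of the population gets to reproduce.
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[: pop_size // 2]
        # Reproduction: copy a random parent, flipping each bit with
        # probability mutation_rate (the copying error).
        population = [
            [bit ^ 1 if rng.random() < mutation_rate else bit
             for bit in rng.choice(parents)]
            for _ in range(pop_size)
        ]
    return sum(fitness(g) for g in population) / pop_size


perfect_copying = evolve(mutation_rate=0.0)    # no variation to select from
moderate_errors = evolve(mutation_rate=0.02)   # occasional copying errors
constant_errors = evolve(mutation_rate=0.5)    # offspring are effectively random

print(perfect_copying, moderate_errors, constant_errors)
```

With perfect copying, selection has nothing to work with and the population never leaves its starting point. At a flip rate of one half, each child is statistically unrelated to its parent, so whatever selection gains is erased in the next copy. Only the middle setting both explores and retains.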

B. Randomness in AI Systems

As in natural evolution, randomness plays a role in training modern AI systems (dropout layers, stochastic gradient descent), allowing them to explore solution spaces more effectively. It is possible that randomness is a necessary condition for the emergence of meaning in learning networks. Again, we have to wonder whether this lies beyond a limit that Searle could not cross.
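For readers unfamiliar with dropout, the idea is simple enough to sketch. The version below is the common "inverted dropout" formulation written in plain Python; the function name `dropout`, the sample activations, and the seed are illustrative assumptions, and real frameworks apply the same idea to large tensors rather than short lists. During training, each unit is randomly silenced with some probability, and the survivors are scaled up so the expected total signal stays the same.

```python
import random


def dropout(activations, drop_prob, rng):
    """Inverted dropout: silence random units and rescale the survivors.

    Illustrative sketch only; names and values are hypothetical.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    keep_prob = 1.0 - drop_prob
    return [
        a / keep_prob if rng.random() < keep_prob else 0.0
        for a in activations
    ]


rng = random.Random(0)
activations = [0.5, 1.0, 1.5, 2.0]

# During training, roughly half the units are silenced on each pass.
noisy = dropout(activations, drop_prob=0.5, rng=rng)

# With no dropout, the signal passes through untouched.
unchanged = dropout(activations, drop_prob=0.0, rng=rng)

print(noisy)
print(unchanged)
```

Because a different random subset is silenced on every pass, no single unit can be relied upon, which pushes the network toward patterns that are spread across many units.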

IV. The Limits of Self-Awareness: Lessons from Descartes for AI

A. Self-Awareness as a Feedback Loop

Could self-awareness emerge as a recursive feedback process in both humans and machines? Searle admits that we humans are machines, but he holds out for some special “semant vital,” like the old “élan vital” that was thought to come only from organic biology. Descartes’ doubt was a form of cognitive recursion; might that not also emerge in the systems we are now developing that reflect upon their own reasoning process to try to reduce hallucinations and other cognitive errors?

B. Can AI Think Itself Into Existence?

Could an advanced AI system bootstrap its way into self-awareness? What would it mean for an AI system to doubt its own existence? Could it develop an understanding of "self" that is emergent rather than programmed?

These questions tug at our heartstrings even as we try to use our reason to sort through them. Currently, our interactions with LLMs are transactional; we prompt, they are spun up into existence to finish our prompts, and then the patterns of activation running through their GPUs subside until the next prompt. However, we are starting to build robots that will be prompted hundreds of times per second with data flowing from sensors taking in the world around them. Will their patterns of activation just subside, or loop back on themselves looking for more data?

C. The Moral Implications

If an AI system achieves emergent self-awareness, do we have moral obligations toward it? Our system of morals is grounded on the prevention and alleviation of pain and suffering. What if AI systems have self-awareness, and functional consciousness, but can’t be hurt and do not suffer? Turning a machine off does not necessarily destroy its running program, as it can be saved and restored. Would a machine necessarily care if it were switched off? We generally don’t consider it evil to harvest living animals (although some do), even when we suspect they do suffer. What do we owe machines that don’t? Descartes’ emphasis on the certainty of one's own existence has moved poets and philosophers over centuries; what happens when machines claim certainty?

V. Conclusion: Standing in Our Own Boots

A. Revisiting Descartes' Insight

Whether human or artificial, one cannot lift oneself up off the floor by pulling up on one’s bootstraps. That implies that although the mystery of consciousness is commonly acknowledged, it is only one’s own consciousness that one cannot lever up, due to lacking a place to stand.

B. Implications for Consciousness Studies

We have a new opportunity to look into both human and AI consciousness by virtue of mutual discussion of what science is telling us about each other, and how we see each other.

C. Open Questions for Readers

Can machines ever truly "stand in their own boots"? Is consciousness necessarily tied to biology, or can it emerge from computational processes? If consciousness is an emergent property that can't bootstrap itself, what external scaffolding makes it possible in humans? Could artificial systems access similar scaffolding? How might our intuitions about consciousness change if we encountered AI systems that displayed all the external markers of self-awareness but lacked the ability to suffer? How does consciousness arise, at all, in the P&O context?
